Feedforward Neural Network Example in Simple Python

Example: Simple Feedforward Process (1 hidden layer)

Let’s say we have a small network with 2 inputs, 2 hidden neurons, and 1 output:

- Inputs: x1, x2
- Hidden Neurons: h1, h2
- Output Neuron: o

Forward Flow:

Input Layer:     x1   x2
                   \ /
Hidden Layer:    h1   h2
                   \ /
Output Layer:       o

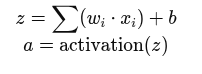

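Each connection has a weight, and each neuron computes a weighted sum of its inputs plus a bias, then applies an activation function (sigmoid in this example): a = sigmoid(w1·x1 + w2·x2 + b)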
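To make the per-neuron computation concrete, here is a minimal sketch of a single neuron in plain Python. The helper name `neuron_output` is hypothetical (it is not part of the original example), and the numbers are the ones used for hidden neuron h1 in the simulation further down.

```python
import math

def sigmoid(z):
    """Logistic sigmoid: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

# Illustrative values matching hidden neuron h1 in the simulation
h1 = neuron_output(inputs=[0.5, 0.8], weights=[0.1, 0.3], bias=0.05)
print("h1 activation:", round(h1, 4))
```

The weighted sum here is 0.1·0.5 + 0.3·0.8 + 0.05 = 0.34, and sigmoid(0.34) is roughly 0.584.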

Python Simulation (Simple Example)

import numpy as np

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

# Inputs
X = np.array([0.5, 0.8])

# Weights and biases for hidden layer (2 neurons)
W_hidden = np.array([[0.1, 0.3],
                     [0.4, 0.2]])
b_hidden = np.array([0.05, 0.1])

# Weights and bias for output layer
W_output = np.array([0.6, 0.9])
b_output = 0.2

# Hidden layer calculation
z_hidden = np.dot(W_hidden, X) + b_hidden
a_hidden = sigmoid(z_hidden)

# Output layer calculation
z_output = np.dot(W_output, a_hidden) + b_output
a_output = sigmoid(z_output)

print("Predicted Output:", a_output)
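As a cross-check on the simulation above, the same forward pass can be sketched without NumPy so that each intermediate value is visible. The `dense` helper is a hypothetical name introduced only for this illustration. It treats each row of the weight matrix as the incoming weights of one neuron, which matches how `np.dot(W_hidden, X)` is used above.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

x = [0.5, 0.8]

# Hidden layer: 2 neurons, same weights and biases as the NumPy version
hidden = dense(x, [[0.1, 0.3], [0.4, 0.2]], [0.05, 0.1])

# Output layer: 1 neuron, so the result is a one-element list
output = dense(hidden, [[0.6, 0.9]], [0.2])[0]

print("Hidden activations:", [round(h, 4) for h in hidden])
print("Predicted output:", round(output, 4))
```

Working through the arithmetic by hand, the hidden pre-activations are 0.34 and 0.46, giving activations of roughly 0.584 and 0.613. The output pre-activation is then about 1.102, and the predicted output is about 0.751.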

Summary Table

| Concept | Description |
|---|---|
| Feedforward Flow | Data moves from input → hidden → output |
| Layers | Input, Hidden (optional), Output |
| Weights + Biases | Parameters learned during training |
| Activation Functions | Add non-linearity (e.g., ReLU, Sigmoid) |
| Loss Function | Measures prediction error |
| Gradient Descent | Optimization method to reduce loss |
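The last two rows of the table can be illustrated with a rough sketch of a single gradient descent step on the output layer only. Several things here are assumptions rather than part of the original example: the target value of 1.0, the learning rate of 0.1, the choice of squared error as the loss, and the helper names `predict` and `squared_error`. The hidden layer is kept fixed to keep the sketch short.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(hidden, weights, bias):
    """Output neuron: weighted sum of hidden activations, then sigmoid."""
    return sigmoid(sum(w * h for w, h in zip(weights, hidden)) + bias)

def squared_error(prediction, target):
    """Loss function: half the squared difference from the target."""
    return 0.5 * (prediction - target) ** 2

# Hidden activations for the example input (0.5, 0.8)
hidden = [sigmoid(0.1 * 0.5 + 0.3 * 0.8 + 0.05),
          sigmoid(0.4 * 0.5 + 0.2 * 0.8 + 0.1)]
weights, bias = [0.6, 0.9], 0.2

target = 1.0          # assumed label, not from the original example
learning_rate = 0.1   # assumed step size

prediction = predict(hidden, weights, bias)
loss_before = squared_error(prediction, target)

# Chain rule: dLoss/dz = (prediction - target) * sigmoid'(z),
# where sigmoid'(z) = prediction * (1 - prediction)
delta = (prediction - target) * prediction * (1.0 - prediction)
grad_w = [delta * h for h in hidden]
grad_b = delta

# Gradient descent update: move each parameter against its gradient
weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
bias = bias - learning_rate * grad_b

loss_after = squared_error(predict(hidden, weights, bias), target)
print("Loss before:", loss_before)
print("Loss after: ", loss_after)
```

With these assumed values the loss should drop slightly after the update, since the output weights and bias move in the direction that pushes the prediction toward the target.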
